The Anselm Project has a new report type: apologetics. I've been working on it for a while, and it's finally ready. A sample report on the question "Why does God allow suffering?" is available publicly in the Share Gallery.

What an Apologetics Report Actually Does

The idea behind apologetics reports is different from the passage, topical, or Synod reports you may already be familiar with.

Instead of analyzing a text or deliberating on a theological question round-robin style, an apologetics report takes a single question and routes it through nine distinct schools of apologetic thought. Each school gets the same question and produces its own complete analysis — its methodology, core arguments, the strongest objections against it, its rebuttals to those objections, and its scriptural foundation.

The nine schools are Classical, Evidential, Presuppositional, Reformed Epistemology, Cumulative Case, Experiential/Existential, Scientific/Intelligent Design, Cultural/Narrative, and Moral apologetics. That covers the major traditions — Van Til and Bahnsen's presuppositionalism sits alongside Plantinga's Reformed Epistemology, Habermas's evidential minimal-facts approach, and Swinburne's cumulative case probabilism. They're not always friendly to each other, and the report doesn't pretend they are.

After all nine schools complete their analysis, a synthesis AI reads the full output and produces a master summary: where the schools agree, where they genuinely contradict each other, which approach works best for the specific question asked, and what unresolved questions remain even after the best answers are given.

What Makes It Worth Using

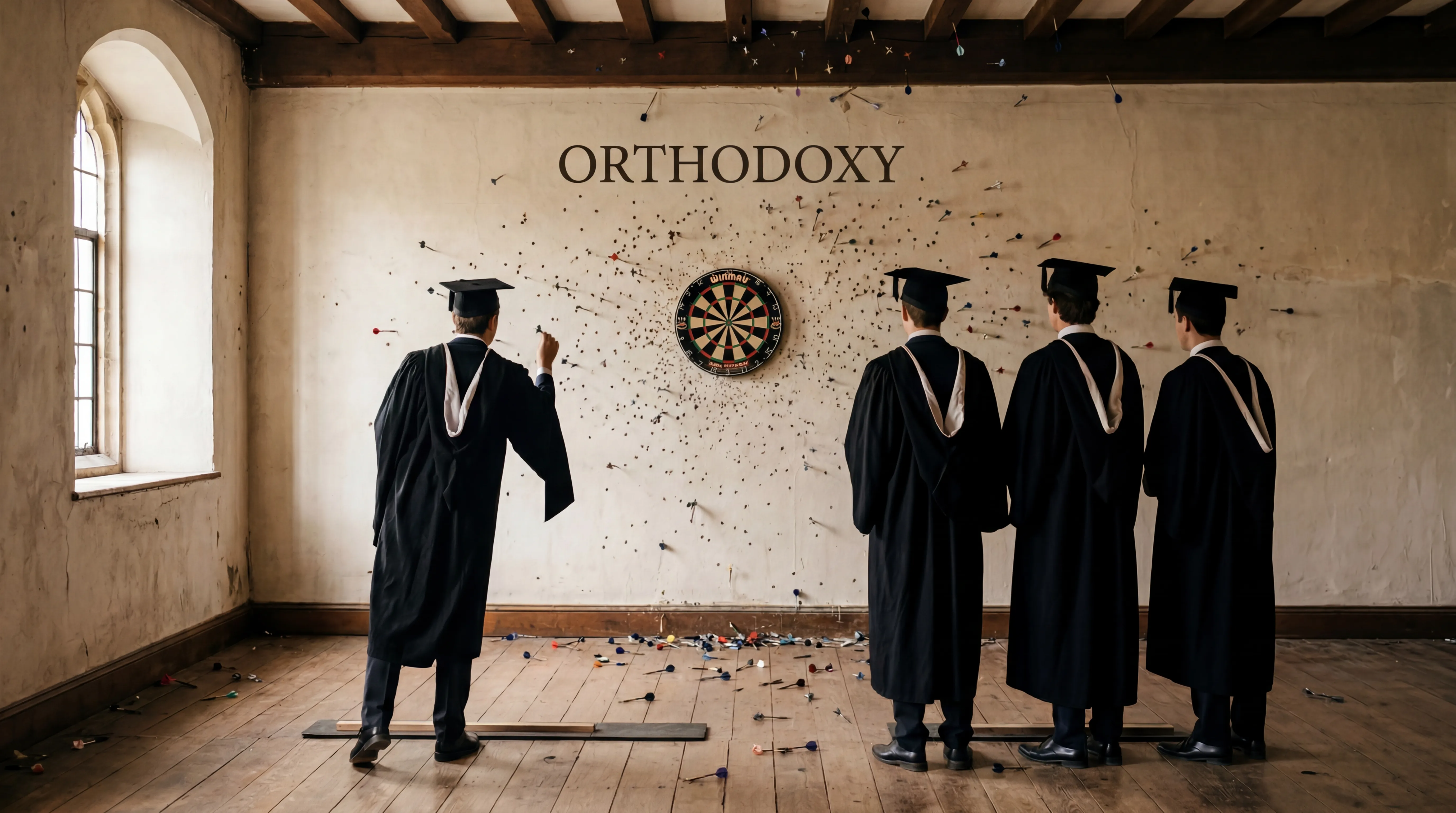

I'll be honest — I wasn't sure this would work. Apologetics is a domain where AI tends to flatten things. It gives you the standard talking points, hedges everything, and doesn't commit. That's not useful for a pastor or a seminarian who needs to actually engage a skeptic or a doubting member.

So I put significant effort into the prompting to force the AI to do three things it usually avoids: steelman objections, name real thinkers and real arguments (without fabricating citations), and identify genuine contradictions between schools rather than pretending they all arrive at the same place.

The report on suffering is a good test case because it is arguably the hardest question in apologetics. Plantinga's free will defense handles the logical problem of evil: it shows that God and moral evil are not logically inconsistent. William Rowe's evidential problem of evil is different and harder, and the report doesn't let any school off the hook for it. The objections section for each school requires a steelmanned version of the objection (the strongest possible form of the critic's case) before the school responds.

That discipline produces something more useful than a summary of theodicy positions. You see where each school is genuinely strong, and you see where it runs out of road.

The Pipeline

For those interested in how it works technically, the report runs 38 API calls in total. Each of the nine schools generates four phases in parallel: a core response, a deep argumentation section, an objections and rebuttals section, and a scriptural foundation. After all 36 school analyses complete, a single synthesis call reads the full context and writes the master summary.
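For readers who want to see the shape of this pipeline in code, here is a minimal Python sketch of the fan-out/fan-in pattern the paragraph above describes. Everything in it is hypothetical: `call_model`, `generate_report`, and the prompt wording are illustrative names, and the stub does not call any real API. The paragraph accounts for 37 calls (36 school phases plus one synthesis); to reach the stated total of 38, the sketch assumes the remaining call is the front-end gatekeeper validation mentioned later in the post. That is a guess, not something the pipeline description confirms.

```python
import asyncio

SCHOOLS = [
    "Classical", "Evidential", "Presuppositional", "Reformed Epistemology",
    "Cumulative Case", "Experiential/Existential",
    "Scientific/Intelligent Design", "Cultural/Narrative", "Moral",
]
PHASES = [
    "core response", "deep argumentation",
    "objections and rebuttals", "scriptural foundation",
]


async def call_model(prompt: str) -> str:
    """Hypothetical stand-in for one API call; no real model is contacted."""
    await asyncio.sleep(0)  # yield control, as a real network call would
    return f"[response to: {prompt[:40]}]"


async def generate_report(question: str) -> dict:
    calls = 0

    # ASSUMPTION: the 38th call is a gatekeeper check. A real version would
    # stop here if the question were rejected; this stub never rejects.
    verdict = await call_model(f"Is this a genuine apologetics question? {question}")
    calls += 1

    # Fan-out: all 9 x 4 = 36 (school, phase) calls run concurrently.
    # Each prompt is built only from the question, so no school sees another's output.
    pairs = [(school, phase) for school in SCHOOLS for phase in PHASES]
    outputs = await asyncio.gather(
        *(call_model(f"As a {s} apologist, write the {p} for: {question}") for s, p in pairs)
    )
    calls += len(pairs)
    analyses = dict(zip(pairs, outputs))  # gather preserves input order

    # Fan-in: a single synthesis call reads the full set of 36 analyses.
    context = "\n\n".join(f"{s} / {p}:\n{text}" for (s, p), text in analyses.items())
    summary = await call_model(f"Compare these analyses of '{question}':\n{context}")
    calls += 1

    return {"gatekeeper": verdict, "analyses": analyses, "summary": summary, "calls": calls}


report = asyncio.run(generate_report("Why does God allow suffering?"))
print(report["calls"], len(report["analyses"]))  # 38 36
```

The design choice that matters is `asyncio.gather`: because the 36 school calls depend only on the question, they can all run at once, and only the synthesis step has to wait for every one of them to finish.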

This is architecturally related to the Synod, which also uses multiple AI agents interacting with a question. The difference is that the Synod is a live deliberation: experts respond to each other in sequence. The apologetics report is more like a reference work: each school works independently, and the synthesis draws comparisons after the fact.

The token count is significant. This is not a cheap report to generate, and I've priced it accordingly at a higher credit cost than a standard passage report. I'm still monitoring the costs and may adjust as I gather more data.

What It's Good For

The most obvious use case is sermon and teaching prep on objections to the faith. If you're preaching through 1 Peter and you know you'll hit the suffering question, this gives you a map of the apologetic terrain rather than a single answer you might have already encountered.

It's also useful for anyone doing direct apologetics work — campus ministry, evangelism, conversations with skeptics. The comparative matrix in the synthesis tells you which school is likely most effective for a given question and audience. That's practical guidance, not just academic taxonomy.

The further reading section at the end gives you thinkers to explore, organized by their contribution to the specific question. It's not a comprehensive bibliography — I explicitly told the AI not to fabricate book titles or publication years — but it names the figures and describes their key arguments, which is enough to start.

If you want to see what it looks like before generating one of your own, the Share Gallery has the suffering report available publicly. No login required.

A Few Honest Caveats

No AI system handles every question equally well. The apologetics engine is strongest on questions with a substantial philosophical literature — existence of God, the problem of evil, the resurrection, the reliability of Scripture. Questions at the fringes of apologetics, or highly technical exegetical questions, are better served by a Synod or a scholarly passage report from the report creation page.

I'm also aware that some schools of thought are better represented in the training data than others. Presuppositionalism, for instance, is a smaller tradition with a more specific vocabulary, and I've taken care to make sure it is represented on its own terms rather than filtered through an evidentialist lens. I think it works, but I'd welcome feedback from anyone with a deep background in that tradition.

The gatekeeper validation at the front end blocks obvious nonsense, but it's not perfect. The question has to be a genuine apologetics question — a defense or explanation of the Christian faith — and it has to be specific enough to produce a meaningful analysis. "Tell me about Christianity" won't get through. "Is the resurrection historically credible?" will.

You can generate an apologetics report from the report creation page. If you generate one worth sharing, post it to the gallery.

God bless, everyone.

Key Terms

Apologetics: The reasoned defense and explanation of the Christian faith against objections and alternative worldviews.

Presuppositional apologetics: An apologetic method arguing that Christian theism is the necessary precondition for intelligible experience and rational thought.

Reformed Epistemology: The view, associated with Alvin Plantinga, that belief in God can be properly basic and does not require external evidence to be rational.

Theodicy: A defense of God's goodness and justice in the face of the existence of evil and suffering.